This is goodbye!

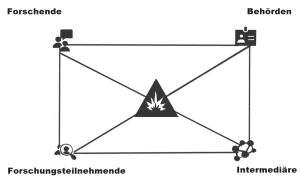

The time has come: demoRESILdigital is coming to an end. Over the course of the project, we contributed in many different ways to research on democratic resilience and how it can be nurtured.

A list of all our papers can be found here: https://www.demoresildigital.uni-muenster.de/publications/

In the future, Dr. Lena Frischlich is going to work at LMU München, in the Department of Media and Communication. In the summer semester, she serves as an interim professor for empirical methods in communication science before joining the team of Prof. Dr. Diana Rieger (https://www.en.ifkw.uni-muenchen.de/research/chairs/rieger/index.html) in the fall. You can contact her at her new email address (lena.frischlich@ifkw.lmu.de).

Lena Clever is staying at Uni Münster, but her focus has shifted after completing her thesis. She will be working as the Program Manager for Information Systems at the Department of Information Systems and can be reached at the following email address: lena.clever@wi.uni-muenster.de.

Tim Schatto-Eckrodt continues working on his thesis at Uni Hamburg, in the Department of Digital Communication and Sustainability, and is available for questions at the following email address: tim.schatto-eckrodt@uni-hamburg.de.